Sample Complexity of Forecast Aggregation

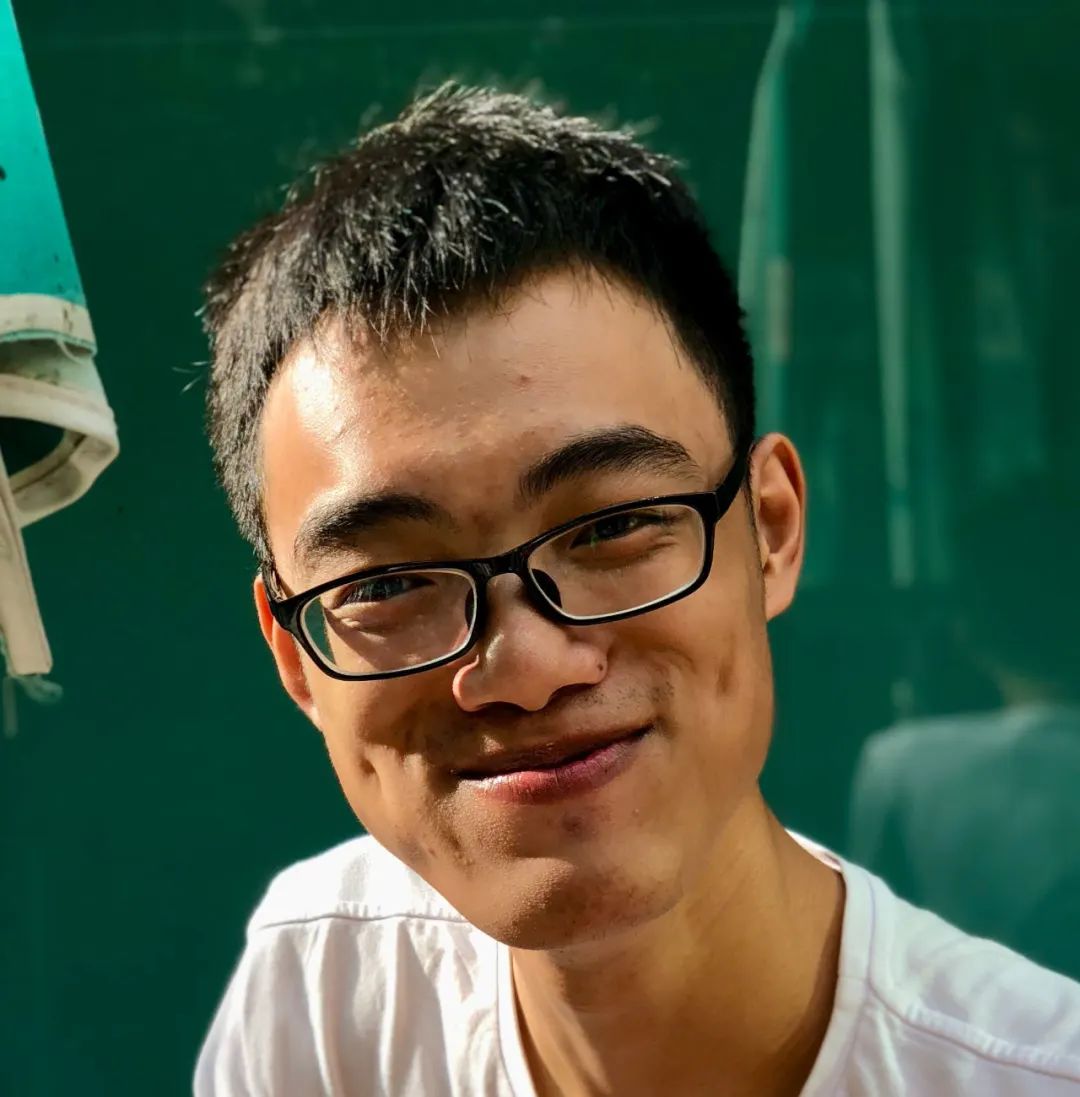

- Tao Lin, Harvard University

- Time: 2023-05-30 16:00

- Host: Turing Class Research Committee

- Venue: Room 204, Courtyard No.5, Jingyuan

Abstract

Suppose you want to know whether it will rain tomorrow. Alice says that the probability of rain tomorrow is 40%; Bob says 85%; Carol says 65%. How should you aggregate these different predictions into a single one?

This is an example of the Bayesian forecast aggregation problem: n experts each observe private signals about an unknown event and report their posterior beliefs about the event to a principal, who then aggregates the reports into a single prediction for the event. The signals of the experts and the outcome of the event follow a joint distribution. The joint distribution is usually unknown to the principal, but in many repeated forecasting settings the principal has access to i.i.d. "samples" from the distribution, where each sample is a tuple of the experts' reports (not signals) and the realization of the event in the past. Using these samples, the principal aims to find an ε-approximately optimal aggregator, where optimality is measured in terms of the expected squared distance between the aggregated prediction and the realization of the event.
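To make the setup concrete, here is a minimal Python sketch of learning an aggregator from samples. It is an illustration only and not the method from the paper. The toy distribution (two experts, binary signals that match the outcome with probability 0.7, a uniform prior) and the names `draw_sample`, `fit_aggregator`, and `squared_loss` are all assumptions made for this example. The learned aggregator simply predicts the empirical frequency of the event for each tuple of reports seen in the samples, which minimizes the squared loss on those samples.

```python
import random
from collections import defaultdict


def draw_sample(rng):
    """Draw one (reports, outcome) pair from a hypothetical toy distribution."""
    outcome = 1 if rng.random() < 0.5 else 0
    reports = []
    for _ in range(2):
        # Each expert's private binary signal matches the outcome w.p. 0.7.
        signal = outcome if rng.random() < 0.7 else 1 - outcome
        # With a uniform prior, the expert's posterior P(outcome = 1 | signal)
        # is 0.7 after signal 1 and 0.3 after signal 0.
        reports.append(0.7 if signal == 1 else 0.3)
    return tuple(reports), outcome


def fit_aggregator(samples):
    """Return an aggregator mapping a report tuple to the empirical event frequency."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for reports, outcome in samples:
        sums[reports] += outcome
        counts[reports] += 1
    table = {r: sums[r] / counts[r] for r in counts}

    def aggregate(reports):
        if reports in table:
            return table[reports]
        # Fall back to simple averaging for report tuples never seen in the samples.
        return sum(reports) / len(reports)

    return aggregate


def squared_loss(aggregator, samples):
    """Average squared distance between the aggregated prediction and the outcome."""
    return sum((aggregator(r) - y) ** 2 for r, y in samples) / len(samples)


def simple_average(reports):
    return sum(reports) / len(reports)


rng = random.Random(0)
train = [draw_sample(rng) for _ in range(20_000)]
learned = fit_aggregator(train)

print("learned aggregator loss:", squared_loss(learned, train))
print("simple averaging loss:  ", squared_loss(simple_average, train))
print("prediction when both experts report 0.7:", learned((0.7, 0.7)))
```

In this toy case, when both experts report 0.7 the learned prediction should come out above 0.7, because two agreeing signals are stronger evidence than either one alone; simple averaging cannot capture this.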

The proof of our main result involves a novel reduction to a classical learning problem: learning discrete distributions in total variation distance. This reduction reveals the surprising fact that forecast aggregation is essentially as difficult as distribution learning.
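For readers unfamiliar with the classical problem mentioned above, the following Python sketch illustrates learning a discrete distribution from samples and measuring the error in total variation distance. It does not reproduce the reduction from the paper; the three-outcome distribution `true_dist` and the function names are hypothetical choices for illustration.

```python
import random
from collections import Counter


def total_variation(p, q):
    """Total variation distance between two discrete distributions given as dicts."""
    support = set(p) | set(q)
    return 0.5 * sum(abs(p.get(x, 0.0) - q.get(x, 0.0)) for x in support)


def empirical_distribution(samples):
    """Estimate a discrete distribution by the observed frequencies."""
    counts = Counter(samples)
    n = len(samples)
    return {x: c / n for x, c in counts.items()}


# Hypothetical distribution to be learned.
true_dist = {"a": 0.5, "b": 0.3, "c": 0.2}

rng = random.Random(0)
draws = rng.choices(list(true_dist), weights=list(true_dist.values()), k=10_000)

estimate = empirical_distribution(draws)
tv_error = total_variation(true_dist, estimate)
print("estimated distribution:", estimate)
print("total variation error: ", tv_error)
```

Increasing the number of draws `k` shrinks the total variation error of the empirical estimate, and the number of draws needed to reach a target error is the sample complexity of this learning problem.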

Link to paper: https://arxiv.org/abs/2207.13126

Biography

Tao Lin is a third-year PhD student in Computer Science at Harvard University, advised by Prof. Yiling Chen. He was an undergraduate in the first Turing Class at Peking University, advised by Prof. Xiaotie Deng. His research interests lie at the intersection of economics and computer science, in particular "mechanism design + machine learning" and "information design + machine learning".